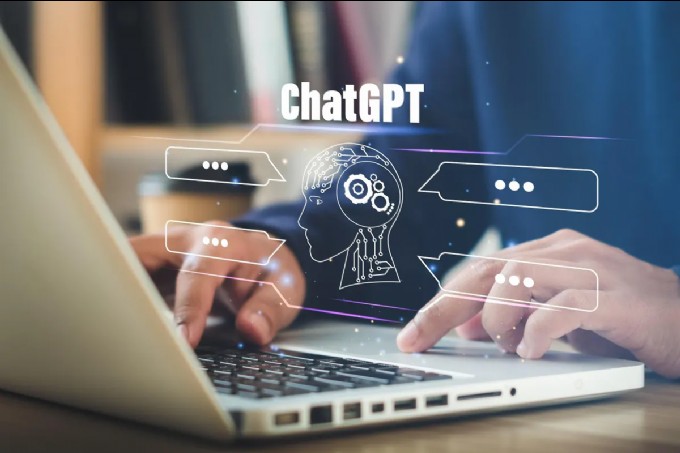

Understanding the Inner Workings of AI Chatbots: How Do They Generate Text?

From acing exams to helping draft emails, ChatGPT has shown off its ability to produce natural-sounding text. However, it's worth exploring how these AI chatbots work and why they sometimes give accurate answers but at other times miss the mark completely. Let's take a peek inside the box.

The technology behind large language models like ChatGPT resembles the predictive text feature on our phones, which suggests the most likely next word based on what has been typed and on the user's past behavior. Unlike phone predictions, however, ChatGPT is generative: it predicts one word at a time (more precisely, one token, which is often a fragment of a word), appends it to the text so far, and repeats, building coherent text that spans multiple sentences and paragraphs. The goal is human-like output that fits the given prompt.
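To make the predictive-text comparison concrete, here is a minimal sketch of a toy next-word predictor. It is not how ChatGPT works internally: it simply counts which word follows which in a tiny made-up corpus (the corpus and the function names `build_bigram_counts`, `predict_next`, and `generate` are hypothetical), then contrasts suggesting a single word, as a phone keyboard does, with generating text by feeding each prediction back in.

```python
from collections import Counter, defaultdict


def build_bigram_counts(text):
    """For every word in the (hypothetical) training text, count which words follow it."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Phone-style suggestion: the most frequent follower, or None if the word is unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def generate(counts, start, max_words=8):
    """Generative use: append each prediction, then predict again from it."""
    output = [start.lower()]
    for _ in range(max_words):
        following = predict_next(counts, output[-1])
        if following is None:
            break
        output.append(following)
    return " ".join(output)


corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
counts = build_bigram_counts(corpus)
print(predict_next(counts, "the"))
print(generate(counts, "the", max_words=6))
```

Running it suggests "cat" after "the", and generation produces "the cat sat on the cat sat". That loop shows why looking only one word back is not enough; large language models base each prediction on a long stretch of the preceding text instead.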

To choose the next word and keep the text coherent, ChatGPT relies on word embeddings. Each word is represented as a list of numbers (a vector), and each number can be thought of as scoring a quality, such as complimentary versus critical, sweet versus sour, or low versus high. Words with similar meanings end up with similar vectors, so together these numbers pin down where each word sits relative to every other word in the model's vast vocabulary.
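The word-qualities idea can be illustrated with a few hand-made vectors. This sketch assumes a hypothetical three-number embedding per word (critical vs complimentary, sour vs sweet, low vs high) and uses cosine similarity to measure how closely two words point in the same direction. Real embeddings are learned rather than hand-labeled, and they have hundreds or thousands of dimensions that do not come with tidy names.

```python
import math

# Hypothetical, hand-picked embeddings. Each position stands for one quality from the text:
# [critical (-1) ... complimentary (+1), sour (-1) ... sweet (+1), low (-1) ... high (+1)]
EMBEDDINGS = {
    "praise":   [0.9, 0.3, 0.1],
    "flattery": [0.8, 0.5, 0.0],
    "insult":   [-0.9, -0.4, 0.0],
    "lemon":    [0.0, -0.9, 0.1],
    "honey":    [0.2, 0.9, 0.0],
}


def cosine_similarity(a, b):
    """1.0 means same direction, near 0 means little overlap, negative means opposite qualities."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(word):
    """Return the other word whose vector points most nearly the same way."""
    target = EMBEDDINGS[word]
    others = [w for w in EMBEDDINGS if w != word]
    return max(others, key=lambda w: cosine_similarity(target, EMBEDDINGS[w]))


print(most_similar("praise"))
print(round(cosine_similarity(EMBEDDINGS["honey"], EMBEDDINGS["lemon"]), 2))
```

Here "praise" comes out closest to "flattery", while "honey" and "lemon" get a negative score because they sit at opposite ends of the sweet/sour axis.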

During training, ChatGPT is exposed to massive amounts of text, such as webpages, digital documents, and entire repositories like Wikipedia. The model learns by predicting hidden words in these sequences, comparing each guess with the word that actually appears, and adjusting its internal numbers, including its word qualities, so that the right answer becomes more likely next time. Repeated across an enormous amount of text, this process takes a long time and requires substantial computing resources.
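The predict, compare, and adjust loop can be sketched with a toy model trained by gradient descent. This is a hypothetical illustration, not ChatGPT's architecture: the hidden word is always the next one, each word gets an input vector (its "qualities") and an output vector, the score for a candidate next word is the dot product of the two, and each step nudges both vectors so that the word that actually came next becomes more probable. The corpus, vector size, learning rate, and the class name `TinyNextWordModel` are all assumptions chosen for the example.

```python
import math
import random


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1 (max subtracted for stability)."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class TinyNextWordModel:
    """Hypothetical toy model: every word has an input vector (its learned 'qualities')
    and an output vector; score(next | prev) = dot(input[prev], output[next])."""

    def __init__(self, corpus, dim=8, seed=0):
        self.words = corpus.split()
        self.vocab = sorted(set(self.words))
        self.index = {w: i for i, w in enumerate(self.vocab)}
        rng = random.Random(seed)  # fixed seed so runs are repeatable
        self.inp = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in self.vocab]
        self.out = [[rng.uniform(-0.5, 0.5) for _ in range(dim)] for _ in self.vocab]
        self.pairs = list(zip(self.words, self.words[1:]))  # (context word, hidden next word)

    def probs(self, prev):
        e = self.inp[self.index[prev]]
        return softmax([sum(a * b for a, b in zip(e, o)) for o in self.out])

    def loss(self):
        """Average cross-entropy: how surprised the model is by each revealed word."""
        return sum(-math.log(self.probs(p)[self.index[n]]) for p, n in self.pairs) / len(self.pairs)

    def train(self, epochs=500, lr=0.1):
        for _ in range(epochs):
            for prev, nxt in self.pairs:
                p = self.probs(prev)  # 1. predict
                target = self.index[nxt]
                # 2. compare with the revealed word: error = probability - (1 if correct else 0)
                err = [pj - (1.0 if j == target else 0.0) for j, pj in enumerate(p)]
                e = self.inp[self.index[prev]]
                grad_e = [sum(err[j] * self.out[j][k] for j in range(len(self.out))) for k in range(len(e))]
                # 3. adjust: gradient-descent step on the output vectors, then on the input vector
                for j, o in enumerate(self.out):
                    for k in range(len(o)):
                        o[k] -= lr * err[j] * e[k]
                for k in range(len(e)):
                    e[k] -= lr * grad_e[k]

    def predict(self, prev):
        p = self.probs(prev)
        return self.vocab[max(range(len(p)), key=p.__getitem__)]


corpus = "sugar is sweet . honey is sweet . lemons are sour . limes are sour ."
model = TinyNextWordModel(corpus)
before = model.loss()
model.train()
after = model.loss()
print(f"average loss: {before:.3f} -> {after:.3f}")
print("after 'is':", model.predict("is"), "| after 'are':", model.predict("are"))
```

After training, the average loss drops and the model predicts "sweet" after "is" and "sour" after "are". ChatGPT rests on the same basic principle, but with a transformer network, far larger vectors, and vastly more text.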

ChatGPT then undergoes an additional training phase called reinforcement learning from human feedback (RLHF). Human evaluators rate and rank the model's responses, and those judgments are used to steer the model toward answers that are more coherent, accurate, and conversational. This step makes the output safer and more relevant, and better aligns it with human expectations.
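Part of the human-feedback step can be sketched as well. In RLHF, human judgments are typically turned into a reward model, which is then used to fine-tune the chatbot. The sketch below covers only that first half, under loud assumptions: each reply is reduced to two hypothetical hand-made features, the preference pairs are invented, and a linear reward is fitted with the Bradley-Terry model, in which the probability that reply a is preferred over reply b is sigmoid(reward(a) - reward(b)).

```python
import math

# Hypothetical features for each candidate reply: [answers_the_question, contains_factual_error]
RESPONSES = {
    "accurate answer": [1.0, 0.0],
    "polite refusal": [0.0, 0.0],
    "confident but wrong answer": [1.0, 1.0],
}

# Hypothetical human judgments, each written as (preferred, rejected).
PREFERENCES = [
    ("accurate answer", "polite refusal"),
    ("accurate answer", "confident but wrong answer"),
    ("polite refusal", "confident but wrong answer"),
]


def reward(weights, features):
    return sum(w * f for w, f in zip(weights, features))


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def fit_reward_model(preferences, responses, steps=500, lr=0.1):
    """Gradient ascent on the Bradley-Terry log-likelihood of the human preferences."""
    dim = len(next(iter(responses.values())))
    weights = [0.0] * dim
    for _ in range(steps):
        grad = [0.0] * dim
        for preferred, rejected in preferences:
            fa, fb = responses[preferred], responses[rejected]
            p = sigmoid(reward(weights, fa) - reward(weights, fb))
            # d/dw log sigmoid(r_a - r_b) = (1 - p) * (f_a - f_b)
            for k in range(dim):
                grad[k] += (1.0 - p) * (fa[k] - fb[k])
        weights = [w + lr * g for w, g in zip(weights, grad)]
    return weights


weights = fit_reward_model(PREFERENCES, RESPONSES)
ranking = sorted(RESPONSES, key=lambda name: reward(weights, RESPONSES[name]), reverse=True)
print(weights)
print(ranking)
```

The learned weights reward answering the question and penalize factual errors, so the ranking comes out as accurate answer, polite refusal, then confident but wrong answer. The reinforcement-learning half, in which the chatbot itself is fine-tuned to produce replies this reward scores highly (commonly with an algorithm such as PPO), is not shown.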

Latest News

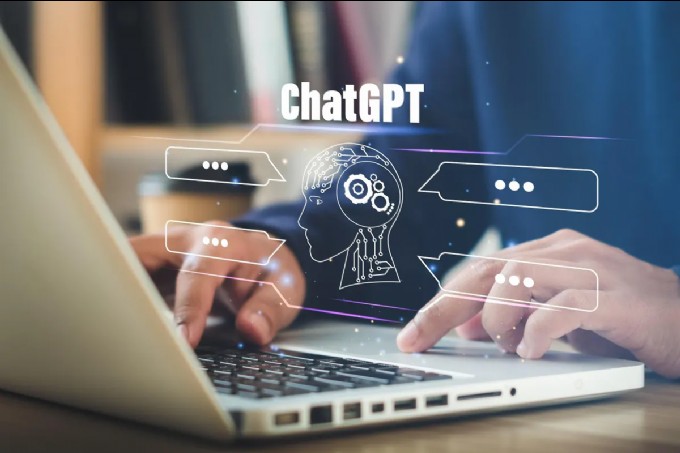

From acing exams to aiding in crafting emails, ChatGPT has showcased its natural-sounding text production abilities. However, it's crucial to explore how these AI chatbots work and why they sometimes provide accurate answers while at other times, miss the mark completely. Let's take a peek inside the box.

The technology behind large language models like ChatGPT resembles the predictive text feature on our phones. It predicts the most likely words based on what has been typed and past user behavior. But unlike phone predictions, ChatGPT is generative and aims to create coherent text strings spanning multiple sentences and paragraphs. The focus is on producing human-like output that aligns with the given prompt.

To determine the next word and maintain coherency, ChatGPT relies on word embedding. It treats words as mathematical values representing different qualities, such as complimentary or critical, sweet or sour, and low or high. These values allow for precise identification of words within a vast language model.

During training, ChatGPT is exposed to massive amounts of content, such as webpages, digital documents, and even entire repositories like Wikipedia. The model learns by predicting the missing words in sequences and adjusting its word qualities based on the revealed answers. This training process takes time and requires substantial computational resources.

ChatGPT undergoes an additional training phase called reinforcement learning from human feedback. Human evaluators rate the model's responses, helping it improve coherence, accuracy, and conversational quality. This step enhances the model's output, making it safer, more relevant, and aligning it better with human expectations.
